## Using Conda

Conda is an open-source package management and environment management system (developed by Anaconda), which is best installed through … The tool is both cross-platform and language agnostic, and in practice, conda can replace both …

Conda uses so-called channels to distribute packages, and together with the default channels by Anaconda itself, the most important channel is conda-forge, which is the community-driven packaging effort that is the most extensive and the most current (and also serves as the upstream for the Anaconda channels in most cases).

You can create a new conda environment from your terminal and activate it.

When working from a downloaded Spark distribution, set `SPARK_HOME` from inside the Spark directory and add the zip archives under `python/lib` to `PYTHONPATH`:

`` export SPARK_HOME=`pwd` ``

`` export PYTHONPATH=$(ZIPS=("$SPARK_HOME"/python/lib/*.zip); IFS=:; echo "${ZIPS[*]}"):$PYTHONPATH ``

## Installing from Source

To install PySpark from source, refer to Building Spark.

Note that PySpark requires Java 8 or later with `JAVA_HOME` properly set. If using JDK 11, set `…=true` for Arrow-related features and refer …

Note for AArch64 (ARM64) users: PyArrow is required by PySpark SQL, but PyArrow support for AArch64 … If PySpark installation fails on AArch64 due to PyArrow …

Traverse to the `spark/conf` folder and make a copy of the `spark-env.sh`. (On Master only) To set up the Apache Spark Master configuration, edit the `spark-env.sh` file.

… It can change or be removed between minor releases.
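The `SPARK_HOME` and `PYTHONPATH` exports shown earlier can also be mirrored from Python, which may help when a shell profile is not available. The following is a minimal sketch using only the standard library. The function name `spark_pythonpath` is hypothetical and not part of Spark, and unlike the shell form it leaves out the existing path when that path is empty.

```python
import glob
import os


def spark_pythonpath(spark_home, existing=""):
    """Join the zip archives under <spark_home>/python/lib into a search path.

    Hypothetical helper (not part of Spark): mirrors the shell export, but
    skips the existing path when it is empty instead of leaving a trailing
    separator.
    """
    pattern = os.path.join(spark_home, "python", "lib", "*.zip")
    zips = sorted(glob.glob(pattern))  # sorted for a stable order
    parts = zips + ([existing] if existing else [])
    return os.pathsep.join(parts)
```

The result could be assigned to `os.environ["PYTHONPATH"]` before launching a child process. Doing so does not change the import path of the interpreter that is already running.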


You can download the latest Spark release, pre-built for Apache Hadoop, from the official Spark website.

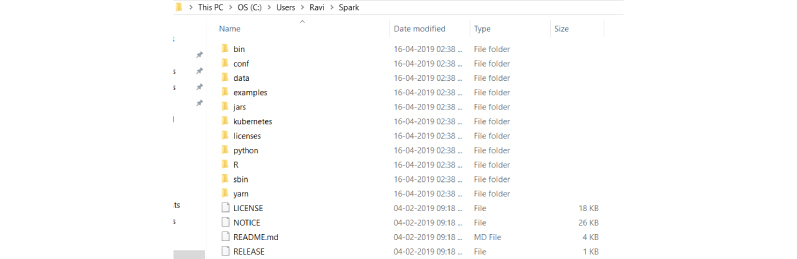

You just have to download Apache Spark and extract the files to your local machine.
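The extraction step can be scripted as well. The sketch below assumes the download is a tar archive (for example a gzip-compressed `.tgz`). The function name `extract_spark` and any paths passed to it are placeholders chosen for illustration, not names used by Spark.

```python
import tarfile
from pathlib import Path


def extract_spark(archive, dest):
    """Extract a downloaded Spark tarball into dest.

    Hypothetical helper: returns the names of the top-level entries found
    in dest afterwards. Only use it on archives from a trusted source.
    """
    dest = Path(dest)
    dest.mkdir(parents=True, exist_ok=True)
    # "r:*" lets tarfile detect the compression (gzip, bz2, xz, or none).
    with tarfile.open(archive, "r:*") as tar:
        tar.extractall(dest)
    return sorted(p.name for p in dest.iterdir())
```

The returned list should normally contain a single directory, which is the one `SPARK_HOME` would point to.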


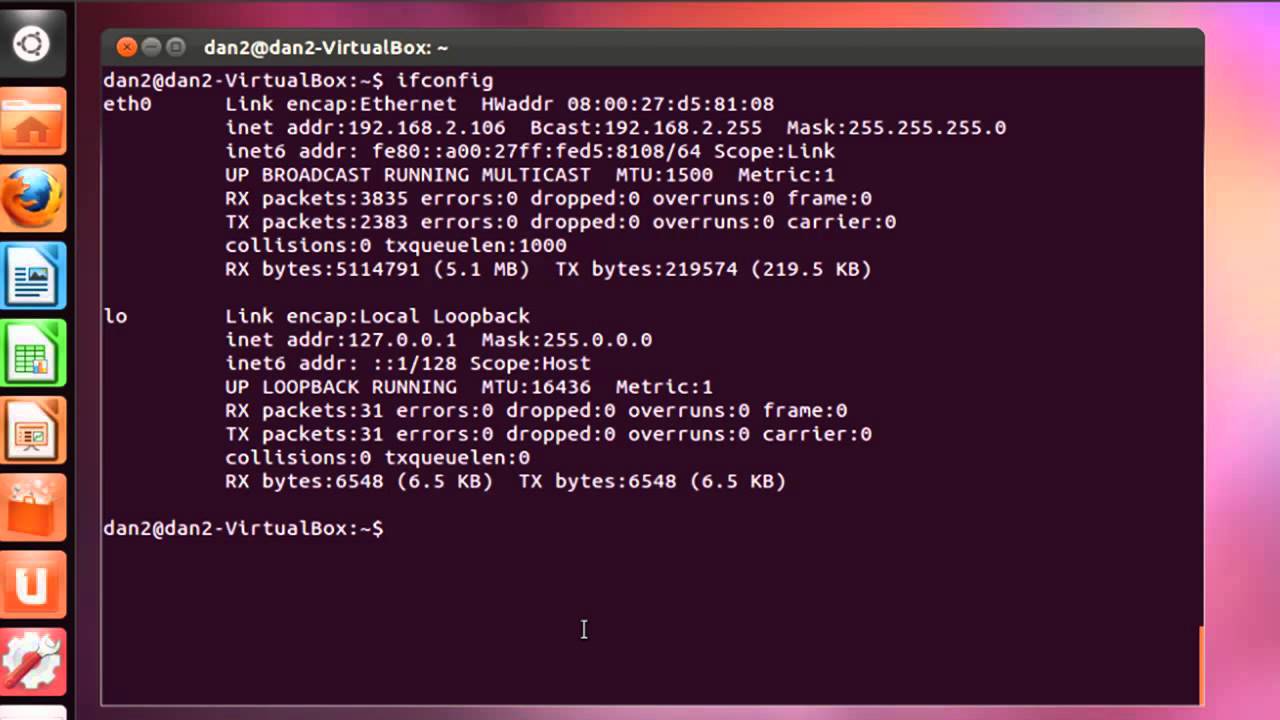

Spark supports both Windows and UNIX-like systems, such as Linux, Mac OS, etc.

First, log in to your Atlantic.Net Cloud Server. Create a new server, choosing Rocky Linux 8 as the operating system with at least 2GB RAM. Connect to your Cloud Server via SSH and log in using the credentials highlighted at the top of the page. Once you are logged in to your server, run the …

To download the latest version of mHub - Spark, open a ticket at …

To install PySpark with or without a specific Hadoop version, set `PYSPARK_HADOOP_VERSION` when running pip:

`PYSPARK_HADOOP_VERSION=2.7 pip install pyspark -v`

Supported values in `PYSPARK_HADOOP_VERSION` are:

- `without`: Spark pre-built with user-provided Apache Hadoop
- `2.7`: Spark pre-built for Apache Hadoop 2.7
- `3.2`: Spark pre-built for Apache Hadoop 3.2 and later (default)

Note that this way of installing PySpark with or without a specific Hadoop version is experimental.
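The `PYSPARK_HADOOP_VERSION` install can also be prepared from Python. This is a minimal sketch, assuming pip is available for the current interpreter. The helper `pip_install_plan` is hypothetical, and the accepted values are simply the ones listed on this page. It only builds the command and environment, so nothing is installed until the result is run.

```python
import os
import sys

# Values listed on this page for PYSPARK_HADOOP_VERSION.
SUPPORTED_HADOOP_VERSIONS = ("without", "2.7", "3.2")


def pip_install_plan(hadoop_version):
    """Return (command, env) for installing PySpark with a Hadoop version.

    Hypothetical helper: it validates the value and builds the pip
    invocation, but does not execute it.
    """
    if hadoop_version not in SUPPORTED_HADOOP_VERSIONS:
        raise ValueError(
            f"unsupported PYSPARK_HADOOP_VERSION: {hadoop_version!r}"
        )
    # Copy the current environment and add the variable pip will see.
    env = dict(os.environ, PYSPARK_HADOOP_VERSION=hadoop_version)
    command = [sys.executable, "-m", "pip", "install", "pyspark", "-v"]
    return command, env
```

Passing the result to `subprocess.run(command, env=env, check=True)` would then perform the install with the chosen value.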